Google handed one developer a $70,000 bill in August 2025. The fix — mandatory monthly spend caps rolled out April 1, 2026 — may have quietly handed OpenAI a structural advantage over every seed-to-Series-A startup that was planning to scale on cheap Gemini tokens. That's the story underneath the story.

The Spread That Gets Quoted and the One That Doesn't

The headline numbers are real. According to OpenAI's March 2026 pricing page, GPT-4o runs $2.50 per million input tokens and $10.00 per million output. Anthropic's Claude Opus 4.7, released April 16, 2026, costs $5.00 input and $25.00 output — the most expensive flagship rate among the three majors. Google's Gemini 2.5 Flash-Lite sits at $0.10 per million input tokens, per FindSkill.ai's April 2026 benchmarks. That's a 50x spread between the cheapest and most expensive input rates.

That spread is almost meaningless for any startup that has done the actual math.

All three providers now offer a 50% discount for asynchronous batch workloads. Anthropic and OpenAI both offer up to 90% savings through prompt caching, according to Finout's April 2026 analysis. The two levers hit different parts of the bill: batch halves input and output rates alike, while caching discounts repeated input tokens only. A startup running Claude Opus 4.7 synchronously at $5.00 input and $25.00 output that restructures those same calls as async batch jobs with aggressive prompt caching could land at $12.50 per million output tokens and, where the discounts stack, as little as $0.25 per million cached input tokens. On an input-heavy workload, that blended rate comes in below GPT-4o at list price. Almost no early-stage team is systematically exploiting both levers simultaneously. The average organization spends $1.2 million on AI-native applications in 2026, per GrowthNavigate's B2B SaaS Statistics report, with that spend growing 108% year over year. Leaving prompt-caching arbitrage on the table at that scale isn't a minor inefficiency. At a 40% gross margin target, it's the difference between hitting your number and not.
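The discount arithmetic is easy to sanity-check. The sketch below is a rough model, not any provider's billing logic: it assumes the batch and caching discounts stack multiplicatively and that caching applies to input tokens only. Both assumptions should be checked against each provider's current terms. The function name and the 80% cache-hit share are illustrative.

```python
def effective_rate(list_price, batch=False, cached_fraction=0.0,
                   batch_discount=0.50, cache_discount=0.90):
    """Effective USD per million tokens after discounts.

    cached_fraction is the share of tokens billed at the cached rate (0 to 1).
    Assumes the two discounts multiply; real billing rules may differ.
    """
    if not 0.0 <= cached_fraction <= 1.0:
        raise ValueError("cached_fraction must be between 0 and 1")
    price = list_price * (1 - batch_discount) if batch else list_price
    cached_part = cached_fraction * price * (1 - cache_discount)
    uncached_part = (1 - cached_fraction) * price
    return cached_part + uncached_part


# Claude Opus 4.7 list prices quoted above: $5.00 input, $25.00 output.
opus_input = effective_rate(5.00, batch=True, cached_fraction=0.8)  # hypothetical 80% cache hits
opus_output = effective_rate(25.00, batch=True)  # caching not applied to output

print(f"input:  ${opus_input:.2f} per million tokens")
print(f"output: ${opus_output:.2f} per million tokens")
```

Under those assumptions the input rate falls to about $0.70 and the output rate to $12.50, which is why the mix of input to output tokens matters more than either list price on its own.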

Three Pricing Strategies Wearing a Commodity Costume

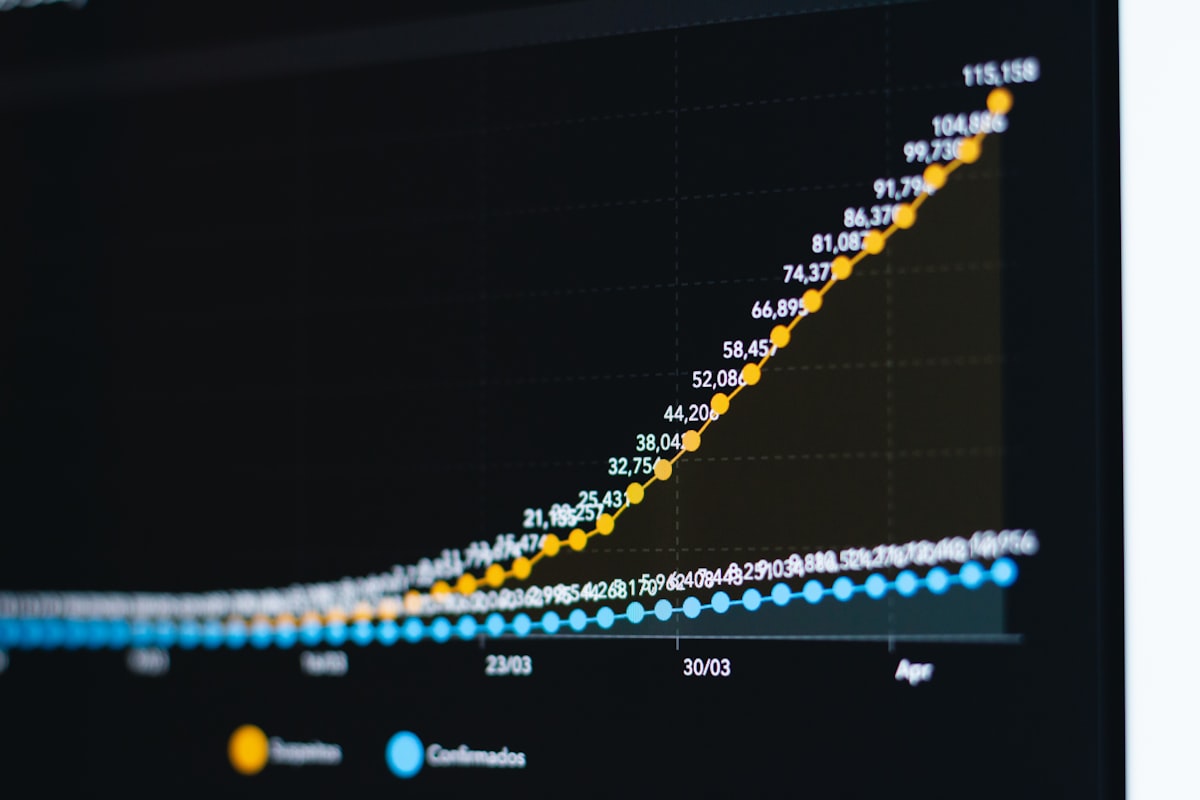

Stanford HAI documented a 280-fold drop in GPT-3.5-level query costs between November 2022 and October 2024 — from $20 per million tokens to $0.07. That deflationary curve didn't stop; it fragmented. What looks like a stable pricing menu today is three companies running three completely different monetization strategies.

OpenAI is selling an ecosystem. The Assistants API, the function-calling schema, the fine-tuning pipeline: every proprietary feature is a switching cost wearing a capability badge. Anthropic is selling a benchmark. Claude Opus 4.7 leads SWE-bench Verified at 87.6%, per Anthropic's April 2026 documentation. The $5.00/$25.00 rate is not a price; it's a filter. Anthropic is deliberately pricing out high-volume, low-margin workloads (document summarization, support bots, bulk classification) and concentrating on agentic coding pipelines and complex reasoning chains where a wrong answer costs more than the API call. If your engineers bill at $150 an hour, the math on paying 2x on input and 2.5x on output for fewer hallucinations closes faster than most founders admit.
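To see how fast that math closes, here is a back-of-envelope break-even. The task size (20,000 input tokens, 4,000 output tokens) is a hypothetical chosen for illustration; the prices and the $150 hourly rate come from the figures above.

```python
def cost_per_task(in_tokens, out_tokens, in_price, out_price):
    """USD cost of one call, with prices quoted per million tokens."""
    return (in_tokens * in_price + out_tokens * out_price) / 1_000_000


def breakeven_minutes(premium_usd, hourly_rate_usd):
    """Engineer minutes that must be saved per task to justify the premium."""
    return premium_usd / hourly_rate_usd * 60


# Hypothetical agentic coding task: 20k tokens in, 4k tokens out.
gpt4o = cost_per_task(20_000, 4_000, 2.50, 10.00)
opus = cost_per_task(20_000, 4_000, 5.00, 25.00)
premium = opus - gpt4o

print(f"GPT-4o: ${gpt4o:.2f}  Opus 4.7: ${opus:.2f}  premium: ${premium:.2f}")
print(f"break-even: {breakeven_minutes(premium, 150) * 60:.1f} seconds of engineer time")
```

At that task size the premium is about eleven cents, which a $150-an-hour engineer recovers in under three seconds of avoided rework. The comparison only flips at very high call volumes with little human review in the loop.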

Google is selling infrastructure at a loss and hoping you stay in GCP. The 1-million-token context windows across all Gemini models, the free tier, the $0.10 Flash-Lite rate — these are customer-acquisition economics, not margin-positive pricing. Which makes the April 2026 billing overhaul more interesting. Google's Tier 1 spend cap sits at $250 per month. A seed-stage Vancouver company running document ingestion or multimodal processing on Flash-Lite hits that cap at roughly 2.5 billion input tokens per month. Sounds like headroom until you're running any real pipeline volume — then you're filing a tier upgrade request and waiting. OpenAI has no equivalent hard cap.
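The 2.5 billion figure follows directly from the cap and the rate. A minimal sketch, counting input tokens only (output tokens and multimodal inputs would reach the cap sooner); the 200 million tokens-per-day ingestion volume is a hypothetical.

```python
def tokens_at_cap(monthly_cap_usd, price_per_million_usd):
    """Number of tokens that exhaust a monthly spend cap at a flat rate."""
    if price_per_million_usd <= 0:
        raise ValueError("price must be positive")
    return monthly_cap_usd / price_per_million_usd * 1_000_000


def days_until_cap(monthly_cap_usd, price_per_million_usd, tokens_per_day):
    """Days of steady traffic before the cap is reached."""
    return tokens_at_cap(monthly_cap_usd, price_per_million_usd) / tokens_per_day


cap_tokens = tokens_at_cap(250, 0.10)          # Tier 1 cap, Flash-Lite input rate
days = days_until_cap(250, 0.10, 200_000_000)  # hypothetical 200M tokens per day

print(f"{cap_tokens / 1e9:.1f} billion input tokens per month")
print(f"cap reached after {days:.1f} days at 200M tokens/day")
```

At the hypothetical 200 million tokens a day, the cap arrives in under two weeks, well before the billing month ends.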

What Canadian Compliance Does to the Ranking

Every US-focused API cost comparison misses the same line item. Under PIPEDA and the incoming obligations from federal Bill C-27, which contains the Artificial Intelligence and Data Act, a Vancouver startup processing Canadian user data through a US-hosted API endpoint is making a legal exposure decision, not just a cost decision. All three major providers charge a 10% premium for regional data-residency endpoints, per the analysis packs reviewed for this piece. That premium is not optional for any startup handling health records, financial data, or anything that qualifies as a high-impact AI system under C-27's impact-assessment framework.

Cohere, headquartered in Toronto with Canadian data infrastructure, exists precisely because this gap is real. The BC Tech Association has been flagging the data-residency cost to members for two years. The honest cost comparison for a regulated-sector startup in Vancouver (fintech, healthtech, legal AI) isn't OpenAI versus Anthropic versus Google at list price. It's those three with a 10% compliance surcharge applied to every endpoint call. A uniform surcharge leaves the ratios between providers untouched, so the 50x input-token gap between Flash-Lite and Claude Opus 4.7 survives on paper. What changes the ranking is spend-cap friction on the cheap end, plus a surcharge that costs the most dollars exactly where token prices are already highest.
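One way to model the surcharge is as a flat multiplier on list price. The sketch below uses the input rates quoted earlier and a hypothetical volume of one billion input tokens a month; the dictionary keys are labels for this example, not official model identifiers. A flat multiplier scales absolute spend but not the ratio between providers, so any change in ranking has to come from other terms such as caps, credits, or availability of a Canadian endpoint.

```python
LIST_INPUT_USD_PER_M = {
    "gpt-4o": 2.50,
    "claude-opus-4.7": 5.00,
    "gemini-2.5-flash-lite": 0.10,
}


def with_surcharge(prices, surcharge=0.10):
    """Apply a flat regional data-residency premium to every price."""
    return {name: price * (1 + surcharge) for name, price in prices.items()}


def monthly_cost(price_per_million, tokens):
    """USD cost of a month's tokens at a per-million rate."""
    return price_per_million * tokens / 1_000_000


regional = with_surcharge(LIST_INPUT_USD_PER_M)
volume = 1_000_000_000  # hypothetical: 1B input tokens per month

for name, list_price in LIST_INPUT_USD_PER_M.items():
    extra = monthly_cost(regional[name], volume) - monthly_cost(list_price, volume)
    print(f"{name}: +${extra:,.0f} per month for residency")

ratio = regional["claude-opus-4.7"] / regional["gemini-2.5-flash-lite"]
print(f"Opus to Flash-Lite input ratio after surcharge: {ratio:.0f}x")
```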

This is also where the hyperscaler layer muddies the water. AWS Bedrock, Google Cloud, and Azure between them resell Claude, Gemini, and OpenAI models via managed platforms, adding roughly 10% in regional premiums while competing for enterprise workloads through bundled credits. Those credits distort unit economics reporting to investors: a startup showing clean API cost numbers on a board slide may be running on credits that expire in Q3.

The Second-Order Effects Worth Modeling Now

The structural shifts most coverage isn't pricing in:

- Google's Tier 1 spend caps will accelerate mid-stage startup migration toward OpenAI Batch API at scale — the cap creates a ceiling exactly where growth-stage volume lives.

- Anthropic's SWE-bench leadership creates real pricing power specifically in developer-tool startups where output quality reduces QA costs downstream.

- Prompt-caching adoption will bifurcate the market within 18 months: cost-efficient operators running effective rates well below list price, and runway-burning laggards still paying full synchronous rates.

- Regulated-sector startups in BC face a hidden 10% API tax that doesn't appear in any published comparison, skewing true cost analysis away from headline token rates.

- Hyperscaler bundled credits will increasingly obscure true API costs, making unit economics harder to audit at the Series A stage.

According to Coherent Market Insights' 2026 projections, the global generative AI market sits at approximately $121 billion this year, growing at a 33.2% CAGR toward $900 billion by 2033. The foundation model API layer alone is estimated at roughly $30 billion in 2026, with OpenAI and Anthropic together accounting for $13 to $15 billion of that segment, per New Market Pitch's March 2026 analysis. Anthropic closed a $30 billion Series G at a $380 billion post-money valuation in February 2026, with annualized revenue of approximately $14 billion at the time. These are not companies competing on price. They are companies competing on lock-in.
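The market projection is internally consistent, which a quick compound-growth check confirms. This sketch assumes the 33.2% rate compounds annually over the seven years from 2026 to 2033.

```python
def project(value, cagr, years):
    """Future value under constant compound annual growth."""
    return value * (1 + cagr) ** years


def implied_cagr(start, end, years):
    """Annual growth rate implied by a start value, end value, and horizon."""
    return (end / start) ** (1 / years) - 1


projected_2033 = project(121, 0.332, 7)  # USD billions
rate = implied_cagr(121, 900, 7)

print(f"2033 projection: ${projected_2033:,.0f} billion")
print(f"implied CAGR from $121B to $900B: {rate:.1%}")
```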

The Contrarian Case for Ignoring All of This

A senior infrastructure architect who has run API cost optimization through three Vancouver SaaS exits — and who asked not to be named because they still advise two active portfolio companies — put it flatly: "By the time provider selection materially moves the needle on runway, you're either negotiating custom enterprise contracts that bear no resemblance to published pricing, or you're fine-tuning open-weight models on your own compute and paying nobody per token."

The point is sharper than it sounds. Founders obsessing over $0.10 versus $2.50 per million tokens at seed stage are optimizing a variable that represents maybe 8% of their burn while ignoring the 40% sitting in engineering hours spent prompt-engineering around a model that was the wrong architectural choice from day one. US companies spent $37 billion on generative AI in 2025, more than triple the $11.5 billion of the prior year, per Deloitte data cited by AutoFaceless. Eighty-six percent of enterprises plan to increase AI budgets further in 2026. That money is not going to the startup that picked the cheapest token rate. It's going to the one that shipped.
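A rough runway model makes the comparison concrete. The 8% and 40% shares are read here as shares of monthly burn, per the paragraph above; the $100,000 burn, $1.5 million cash balance, and the size of each cut are hypothetical.

```python
def burn_after_cut(monthly_burn, share, cut):
    """Monthly burn after reducing one cost line (a share of burn) by cut."""
    return monthly_burn * (1 - share * cut)


def runway_months(cash, monthly_burn):
    """Months of runway at a constant burn rate."""
    return cash / monthly_burn


cash, burn = 1_500_000, 100_000  # hypothetical seed-stage figures

baseline = runway_months(cash, burn)
halve_api = runway_months(cash, burn_after_cut(burn, 0.08, 0.50))
trim_eng = runway_months(cash, burn_after_cut(burn, 0.40, 0.25))

print(f"baseline:                     {baseline:.1f} months")
print(f"API bill halved:              {halve_api:.1f} months")
print(f"prompt-wrangling hours -25%:  {trim_eng:.1f} months")
```

Under these assumptions, halving the entire API bill buys less runway than trimming a quarter of the engineering time lost to working around the wrong model.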

The honest 2026 framework: use the cheapest model your best engineer works most effectively with, exploit batch and caching discounts from day one, model the compliance surcharge if you're in a regulated vertical, and treat Google's spend caps as a scaling risk to underwrite before you build your architecture around Flash-Lite. Revisit the provider decision when your monthly API bill actually appears on a board slide, not before.