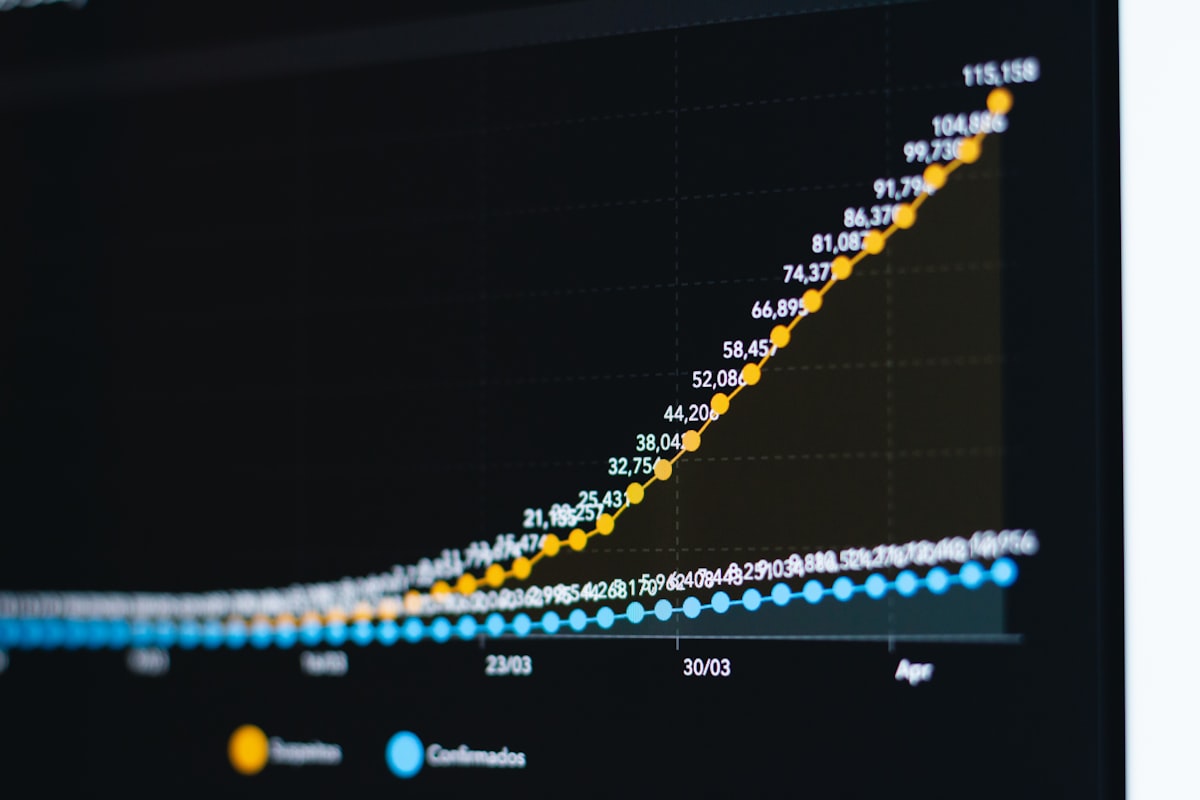

The per-token price of running a GPT-4-equivalent model has fallen from $20 to $0.40 per million tokens since late 2022, according to GPUnex's February 2026 analysis. That is a 50× reduction in roughly three years. It is also, for a growing number of AI startups, completely irrelevant to their actual cost problem.

The Jevons Trap Nobody Put in the Pitch Deck

Here is the math that is not appearing in Series A decks. Suppose your product pays $0.40 per million tokens today, but your users are sending ten times as many queries as you modeled and your feature set now chains four model calls per interaction. That is roughly 40 times the token volume you planned for, enough to cancel most of the price drop on its own. Add longer prompts or retrieved context to each call and your inference bill is higher in absolute dollars than it would have been in 2023 at the old price. That is not a hypothetical. That is what Gartner flagged in March 2026 when it warned that token consumption is growing faster than per-token prices fall, meaning total inference spend keeps climbing even as the unit cost collapses.
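The comparison is easy to check. Below is a minimal sketch using the two prices quoted above; the function name and the `tokens_per_call_growth` parameter are illustrative assumptions, and the 1.5× context growth in the second case is a hypothetical, not a measured figure.

```python
def bill_ratio(old_price, new_price, query_growth, calls_per_interaction,
               tokens_per_call_growth=1.0):
    """Ratio of today's inference bill to the bill originally modeled at the old price.

    Prices are in dollars per million tokens. The growth arguments are
    multipliers relative to the original plan (1.0 means "as modeled").
    """
    volume_growth = query_growth * calls_per_interaction * tokens_per_call_growth
    return (new_price / old_price) * volume_growth


# Prices quoted above; ten times the queries, four chained calls per interaction.
same_size_calls = bill_ratio(20.00, 0.40, query_growth=10, calls_per_interaction=4)

# Hypothetical: each chained call also carries 1.5x the tokens (longer context).
longer_calls = bill_ratio(20.00, 0.40, 10, 4, tokens_per_call_growth=1.5)

print(f"same-size calls: {same_size_calls:.2f}x the modeled bill")
print(f"longer calls:    {longer_calls:.2f}x the modeled bill")
```

Under these assumptions the extra volume alone brings the bill back to about 80% of the old-price plan, and any growth in tokens per call beyond 1.25× pushes the total past it.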

By early 2026, inference represents 55% of all AI infrastructure spending, up from 33% in 2023, according to ByteIota's February 2026 analysis. ByteIota projects that share hits 75–80% of all AI compute by 2030. The inference market itself is projected to exceed $50 billion in 2026, growing faster than the training compute market for the first time. The economic term for what is happening is the Jevons paradox, a form of induced demand: lower unit prices expand consumption so quickly that total spend rises instead of falling. Anyone who watched S3 storage costs drop 80% between 2010 and 2020 while enterprise storage bills quietly grew knows exactly how this plays out. AI inference is running the same playbook at a compressed timescale.

The companies celebrating cheap tokens are, in many cases, accelerating toward a higher absolute cost ceiling. Not escaping one.

What the CloudZero Average Is Hiding

CloudZero's State of AI Costs report found average monthly enterprise AI spend reached $62,964 in 2024. That number is a blended figure, and the distribution underneath it is brutal.

Early-stage AI startups typically spend $2,000–$8,000 per month in prototype phase, scaling to $10,000–$30,000 per month once they hit production, per GMI Cloud's 2026 pricing guide. The founders who see the prototype number convince themselves that cost discipline will hold at scale. It does not. The moment real user load arrives, you are at $10,000–$30,000 a month before you have solved a single optimization problem — and most seed-stage teams have not hired anyone who knows how to solve those problems.

GPU compute consuming 40–60% of a startup's technical budget in year one is not a forecast. It is a confession that the business model was never fully stress-tested. Only 51% of organizations in the CloudZero survey said they could confidently evaluate ROI on their AI spend. For the other half, the bills are arriving before the returns are visible.

For context on what the frontier looks like: Epoch AI estimates Anthropic spent $6.8 billion on compute alone in 2025, with compute representing 57–70% of total spending across leading AI firms. Anthropic's total 2025 spend is estimated at $9.7 billion. These are companies with billions in venture capital and enterprise contracts. The cost structure is not gentler for a 12-person team in Gastown.

The $10 Spread That Is Actually a Strategic Decision

The GPU rental market in 2026 is not a commodity. It is the single largest operating decision most AI startups will make, and most are making it by default.

According to GMI Cloud's April 2026 pricing guide, H100 GPU cloud rental ranges from $2.00 per GPU-hour on specialized providers to $12.29 per GPU-hour on Azure. AWS cut its H100 prices roughly 44% in June 2025, but even post-cut hyperscaler pricing sits well above the specialized market floor. That 6× spread on your largest operating line is not a procurement detail. It is a business model variable.
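To put the spread in monthly terms, here is a rough sketch using the two hourly rates quoted above. The 8-GPU, always-on fleet is a hypothetical workload chosen for illustration, and the function name is an assumption.

```python
HOURS_PER_MONTH = 730  # average hours in a month (8,760 / 12)


def monthly_rental_cost(rate_per_gpu_hour, gpus):
    """Monthly rental cost in dollars for a fleet running around the clock."""
    return rate_per_gpu_hour * gpus * HOURS_PER_MONTH


# Rates quoted above; the 8-GPU fleet is a hypothetical workload.
specialized = monthly_rental_cost(2.00, gpus=8)
azure = monthly_rental_cost(12.29, gpus=8)

print(f"specialized provider: ${specialized:,.0f} per month")
print(f"Azure:                ${azure:,.0f} per month")
print(f"spread:               {azure / specialized:.1f}x")
```

The same eight GPUs cost roughly $11,700 a month at the low end and about $71,800 at the high end, before any reserved-capacity or committed-use discounts, which this sketch ignores.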

The clearest illustration of what that spread means in practice: Midjourney's documented migration from NVIDIA A100 and H100 hardware to Google TPU v6e in Q2 2025 cut their monthly inference bill from $2.1 million to under $700,000 — $16.8 million in annualized savings, according to analysis from Introl and ByteIota. That is not an optimization. That is the difference between a business that works and one that doesn't.

A Vancouver Series A company running $25,000 a month in inference on Azure because it was the path of least resistance at prototype stage is leaving real money on the table every month while burning toward a down round. The on-premise alternative is not obviously better: NVIDIA H100 units run $30,000–$40,000 each, with an 8-GPU server system costing $200,000–$320,000, per GMI Cloud. Ownership only pencils out at sustained utilization above roughly 60–70% — a threshold almost no startup hits in its first two years. Which is why the rental market exists. Which is why the rental spread matters so much.
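The utilization threshold can be approximated with a simple break-even calculation. This is a sketch under stated assumptions: a three-year amortization period, a server price at the midpoint of the range above, and operating costs (power, hosting, staff) left at zero unless supplied. The function and parameter names are illustrative.

```python
HOURS_PER_YEAR = 8760


def break_even_utilization(server_cost, gpus, rental_rate, amortization_years=3,
                           annual_operating_cost=0.0):
    """Utilization at which owning costs the same as renting the hours actually used.

    Owning costs the same regardless of load; renting is billed per GPU-hour used.
    """
    annual_ownership = server_cost / amortization_years + annual_operating_cost
    annual_rental_at_full_load = rental_rate * gpus * HOURS_PER_YEAR
    return annual_ownership / annual_rental_at_full_load


# $260,000 is the midpoint of the 8-GPU server range quoted above (an assumption).
vs_specialized = break_even_utilization(260_000, gpus=8, rental_rate=2.00)
vs_azure = break_even_utilization(260_000, gpus=8, rental_rate=12.29)

print(f"break-even vs $2.00/hr:  {vs_specialized:.0%}")
print(f"break-even vs $12.29/hr: {vs_azure:.0%}")
```

With these inputs the break-even against the specialized rate lands near 62%, in line with the threshold above, while against the Azure rate it falls to about 10%. Adding real operating costs would push both figures higher.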

A senior engineer at a Vancouver-area AI infrastructure company, who asked not to be named because their firm advises clients on exactly these decisions, put it plainly: "We see teams that have been on Azure since their first proof of concept, paying four times what they need to, because migrating feels like a distraction. It is not a distraction. It is the company."

Second-Order Pressures That Are Already Landing

The cost structure is producing effects that go beyond the monthly bill:

- Startups are being forced to hire infrastructure engineers before product-market fit, compressing already thin seed runways.

- Inference optimization — prompt compression, response caching, model routing, fine-tuned smaller models for narrow tasks — is landing on product roadmaps and slowing feature velocity for teams that cannot afford both simultaneously.

- Startups with proprietary inference efficiency are becoming acquisition targets valued for their cost stack, not their product.

- Price competition is beginning to consolidate the GPU rental market around two or three dominant providers, eliminating the smaller specialized clouds that currently offer the lowest rates.
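
Two of the optimizations named in the list above, response caching and model routing, can be sketched in a few lines. Everything here is hypothetical: `call_model` is a stand-in for a real inference client, the two tier names are placeholders, and the word-count router is a deliberately naive heuristic rather than a recommended policy.

```python
import hashlib

_cache = {}  # maps normalized-prompt hash -> (response, tier)


def call_model(tier, prompt):
    """Stand-in for a real inference call; returns a placeholder string."""
    return f"[{tier}] answer to: {prompt}"


def choose_tier(prompt, max_small_words=50):
    """Naive router: short prompts go to the small model, the rest to the large one."""
    return "small" if len(prompt.split()) <= max_small_words else "large"


def answer(prompt):
    """Return (response, tier_used, cache_hit) for a prompt."""
    # Normalize whitespace and case so trivially different prompts share a key.
    normalized = " ".join(prompt.lower().split())
    key = hashlib.sha256(normalized.encode("utf-8")).hexdigest()
    if key in _cache:
        response, tier = _cache[key]
        return response, tier, True
    tier = choose_tier(prompt)
    response = call_model(tier, prompt)
    _cache[key] = (response, tier)
    return response, tier, False


first = answer("What is our refund policy?")
second = answer("what is our refund policy?  ")  # same question, served from cache
print(first[1], first[2])
print(second[1], second[2])
```

Even a sketch this small shows why the work competes with feature development: the cache needs an invalidation policy, and the router needs evaluation data before anyone can trust it with production traffic.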

The SaaS parallel is instructive. The companies that survived the 2015–2018 AWS margin compression were the ones that hired a platform engineering function early and treated infrastructure cost as a first-class product metric. Most Vancouver AI startups are 12 to 18 months behind where they need to be on this.

Canada's $925 Million Answer to a Question Startups Are Actually Asking

Canada's Budget 2025 committed C$925.6 million over five years toward sovereign AI infrastructure, building on a C$2 billion Sovereign AI Compute Strategy launched in 2024, according to Bennett Jones's December 2025 analysis. The goal is expanding domestic GPU compute access for Canadian researchers and companies.

Divide C$925.6 million by five years. Then by the number of Canadian AI companies that will exist by 2028. Then ask what the actual access mechanism looks like for a 12-person startup that needs H100 reservations at a price that changes their unit economics. There is no clear answer yet.
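The first two divisions are quick to run. In the sketch below the company counts are placeholders chosen only to show the order of magnitude, not estimates of how many Canadian AI companies will exist, and an even split is an assumption made purely for illustration.

```python
TOTAL_COMMITMENT_CAD = 925.6e6  # Budget 2025 figure cited above
YEARS = 5


def annual_share_per_company(company_count):
    """Even split of one year's funding across company_count companies, in C$."""
    return TOTAL_COMMITMENT_CAD / YEARS / company_count


# Hypothetical company counts, chosen only to show the order of magnitude.
for count in (500, 1_000, 2_000):
    print(f"{count:>5} companies: C${annual_share_per_company(count):,.0f} each per year")
```

The annual figure is about C$185 million before any split, and researchers draw on the same pool, so the per-company share in practice would be smaller than an even division suggests.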

The policy vacuum compounds the problem. Canada's proposed Artificial Intelligence and Data Act died on the order paper in January 2025 when Parliament was prorogued, leaving no binding federal AI legislation in force as of 2026, per MLT Aikins's March 2026 analysis. British Columbia has introduced a Policy on the use of generative AI and a Digital Code of Practice for public sector employees, but neither addresses the liability exposure that comes with scaling inference workloads involving personal data in a regulatory grey zone. Founders are making architecture decisions — where data is processed, which models handle what queries — without a clear compliance framework to build against.

Hyperscaler capex is on track to hit $600 billion in 2026, a 36% increase over 2025, with 75% tied directly to AI infrastructure, according to ByteIota's January 2026 figures. The incumbents are not waiting for policy clarity. The question is whether Canadian startups can build cost structures that survive long enough to matter before the consolidation that is already underway finishes.

There is a contrarian case. A veteran infrastructure investor will tell you that every major compute cost curve in history — storage, bandwidth, CPU cycles — eventually fell faster than consumption rose, and the startups that survived long enough hit enormous markets. On that view, the right move is to grow into your inference bill aggressively, capture users and data now, and let the cost curve bail you out. It is the same bet that worked for streaming companies that over-indexed on bandwidth costs in 2012.

It is also a bet that requires surviving the years between now and that crossover. And right now, in Vancouver, most seed-stage AI companies are not modeling what those years actually cost.